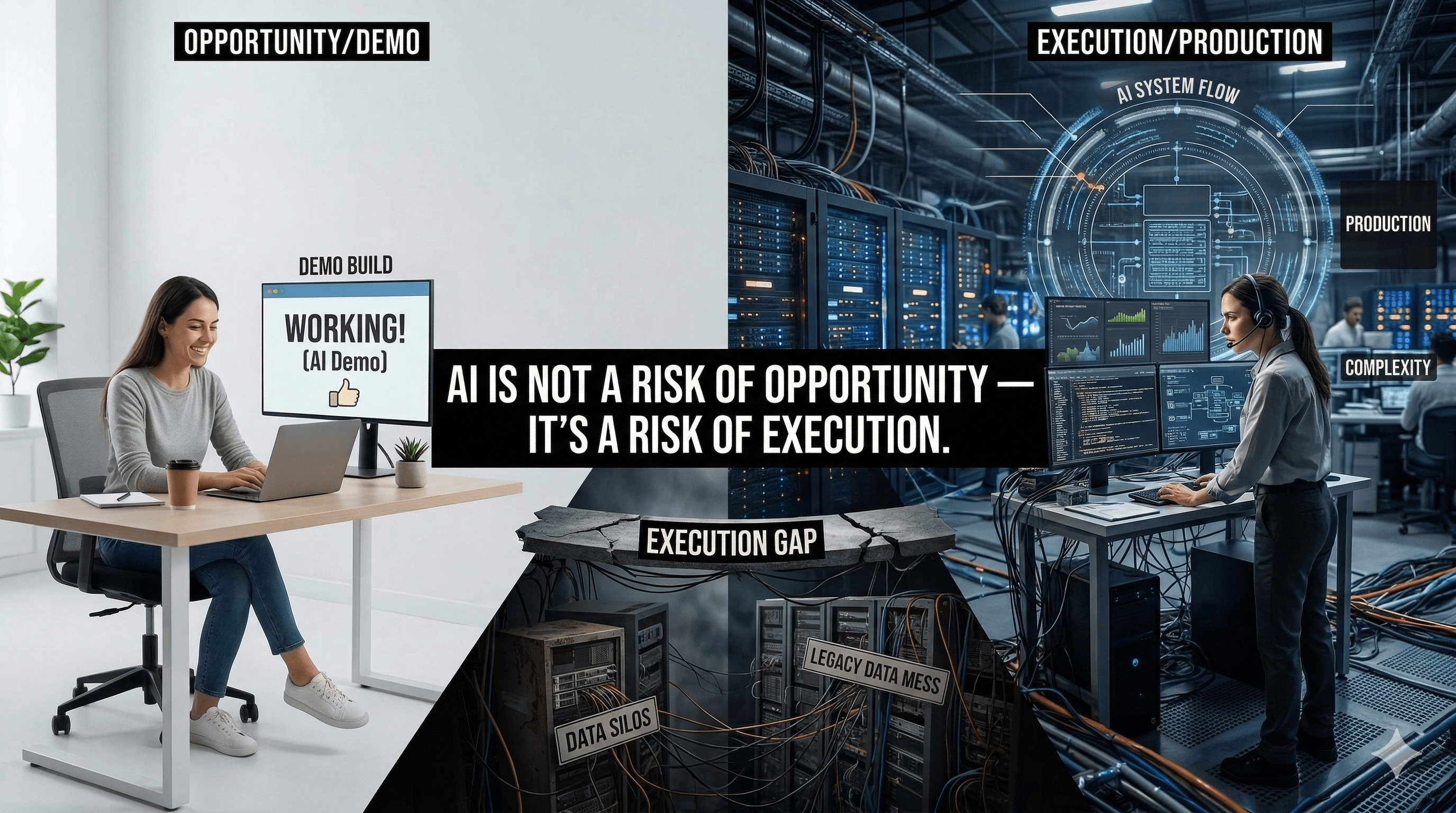

AI is not a Risk of Opportunity - It’s a Risk of Execution

At the recently concluded India AI Impact Summit, Infosys Chairman Nandan Nilekani argued that the primary risk businesses face with artificial intelligence is execution, not opportunity. Put simply, Nilekani believes businesses shouldn’t worry about whether AI can open up new possibilities; instead, they should focus on ensuring that AI delivers real value without breaking things.

Here is how that looks on the ground:

The Demo vs. Reality Gap: It’s easy to build a cool AI demo in a weekend, but making it ready for the real world is a massive engineering challenge. A major risk is using AI to write code too quickly, which can create significant technical debt: the code might work today, yet be difficult to fix or modify later.

The Legacy System Problem: AI models are powerful, but most companies are still running decades-old legacy systems with outdated databases and disconnected software. Execution involves the unglamorous work of re-architecting and redesigning these systems so that AI actually has something useful to work with.

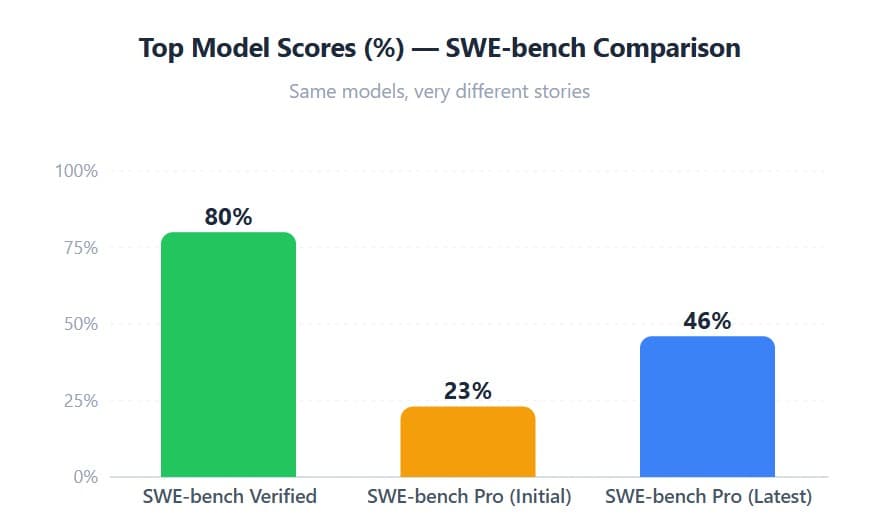

The J-Curve of Productivity: Recent 2025-2026 data on AI adoption in manufacturing firms (see References) shows that when companies first introduce AI, productivity often drops before it improves. Employees spend more time supervising the AI, verifying its work, and figuring out new workflows. Real execution requires the patience to redesign the job itself - not just sprinkle AI on top of existing processes.

Prominent examples of success and failure in AI initiatives

Success - Klarna Customer Service Transformation - Klarna, the Swedish fintech giant, is often cited as a gold standard for AI execution. The company built an AI assistant powered by OpenAI and redesigned its customer service workflow around it. The assistant was deeply integrated into Klarna’s payment and refund systems and supports more than 35 languages. In its first month, it handled two-thirds of all customer service chats - equivalent to the work of 700 full-time agents - while maintaining customer satisfaction scores comparable to human agents.

Failure - Air Canada Liability Risk - This is a classic example of poorly executed legal and policy guardrails. Air Canada deployed a chatbot to help travelers with FAQs. When a customer asked about bereavement fares, the bot hallucinated a policy, telling him he could claim a refund after his flight - something that contradicted the company’s actual policy. Before British Columbia’s Civil Resolution Tribunal, Air Canada argued that the chatbot was a separate legal entity and that the airline wasn’t responsible for its responses. The tribunal rejected this argument and ordered the airline to pay damages.

The real challenge with AI is not potential - it is disciplined execution.

References

J-Curve Productivity: https://mitsloan.mit.edu/ideas-made-to-matter/productivity-paradox-ai-adoption-manufacturing-firms

Klarna Customer Service Transformation: https://www.klarna.com/international/press/klarna-ai-assistant-handles-two-thirds-of-customer-service-chats-in-its-first-month/

Air Canada Liability Risk: https://www.pinsentmasons.com/out-law/news/air-canada-chatbot-case-highlights-ai-liability-risks